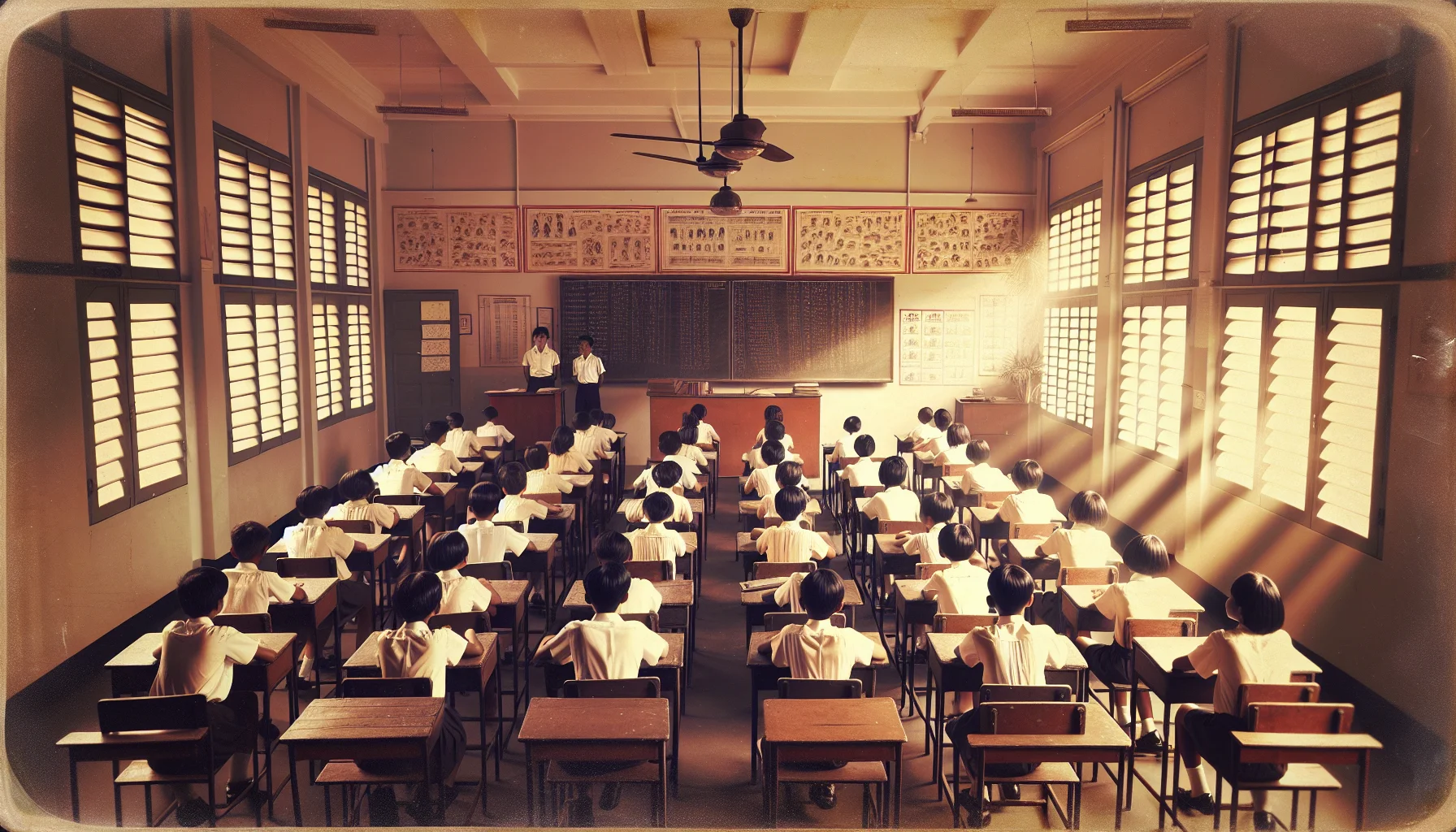

The school year in Singapore is over. Anthony and Ernest are home until January. Their results were good, so they will go to the two top classes. Our school admits the top 80 students into two classes with a wider and deeper curriculum, so there will be forty students in a class. 40 is a good solid number: Jesus went to the desert for 40 days, Ali Baba had to deal with 40 robbers, and it is just two short of the answer to all questions of the universe.

But 40 nine-year-old kids in one room seems a little high to me. So I did a little research. Dr. Ng Eng Hen (Minister for Education) quoted a study published by McKinsey in September 2007 entitled "How the world’s best performing school systems come out on top". According to his quote there seems to be no significant relation between class sizes and results (only 9 out of 112 studies found a positive effect). The key is supposed to be the quality of the teachers. While I fully agree with the importance of teacher quality, I do have some doubts about the class size findings. The result could be a victim of a lack of ceteris paribus: when the size of a class is reduced, more teachers are needed. Since more teachers are needed, less qualified teachers are hired. Less qualified teachers lower the results. It would be interesting to take 3 equally qualified and experienced teachers and let them teach 3 classes, one with 40 and two with 20 students each, and then compare. I would want to make a bet here <g>. Of course that doesn't solve the "where are all the highly qualified teachers for all those many (small) classes" question.

A study focusing on 3rd grade entitled "Teachers’ Training, Class Size and Students’ Outcomes" and published in 2008 comes to a radically different conclusion: "the effect of class size is substantial and significant, a smaller class size improves similarly all students’ reading test scores within a class". The study confirms that the teachers' training is equally significant.

There are quite a few opinions out there: 19, 24, 25 (with a legal maximum of 33) or 35. A very promising-sounding study by Neville Bennett was behind a paywall ($$$). Bennett seems to be quite an authority on the topic of learning. The question is widely debated and I can't fend off that nagging feeling that most of the studies' results are subject to the experimenter's bias effect. I found evidence that two studies both quoted an earlier, third study as evidence for their respective, opposite conclusions. I've taught classes of different ages (12-70) and different sizes (3-30) myself and I don't think 40 is good for learning. So maybe Singapore's outstanding results are the result of world-class tuition rather than the school system.

The long-range thinking and individualistic type. They are especially good at looking at almost anything and figuring out a way of improving it, often with a highly creative and imaginative touch. They are intellectually curious and daring, but might be physically hesitant to try new things.
