In Part 1 I discussed elements vs. attributes and the document nature of business vs. the table nature of RDBMS. In this installment I'd like to shed some light on abstraction levels.

I'll be using a more interesting example than CRM: a court/case management system. When I was at law school one of my professors asked me to look out of the window and tell him what I saw. So I replied: "Cars and roads, a park with trees, buildings and people entering and leaving them, and so on". "Wrong!" he replied, "you see subjects and objects".

From a legal view you can classify everything like that: subjects are actors on rights, while objects are attached to rights.

Interestingly, in object-oriented languages like Java or C# you find a similar "final" abstraction where everything is an object that can be acted upon by calling its methods.

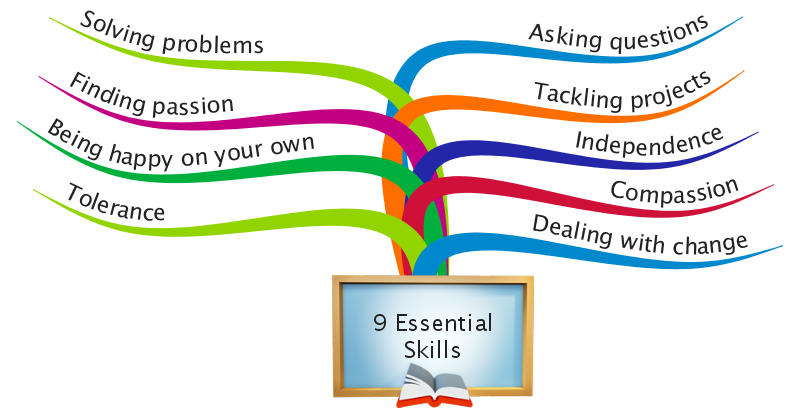

In data modeling the challenge is to find the right level of abstraction: too low and you duplicate information, too high and a system becomes hard to grasp and maintain.

Let's look at some examples. In a court you might be able to file a civil, criminal, administrative or inheritance case. Each filing consists of a number of documents. So when you collect the paperwork during your contextual enquiry, you end up with draft 1:

    <caselist>
      <civilcase id="ci-123"> ... </civilcase>
      <criminalcase id="cr-123"> ... </criminalcase>
      <admincase id="ad-123"> ... </admincase>
      <inheritcase id="in-123"> ... </inheritcase>
    </caselist>

(I'll talk about the inner elements later.) The content will most likely be very similar, with plaintiff, defendant, the representing lawyers etc. So you end up writing a lot of duplicate definitions. And you need to add a completely new definition (and update your software) when the court adds "trade disputes" and, after the V landed, "alien matters" to its jurisdiction.

Of course, keeping the definitions separate has the advantage that you can be much more prescriptive. E.g. in a criminal case you could have an element "maximum-penalty" while in a civil case you would use "damages-sought". This makes data modeling as much an art as a science.
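To make the "more prescriptive" point a bit more tangible, here is a minimal Python sketch. It assumes the element names from draft 1; the `REQUIRED` table and the child element names are purely illustrative and stand in for what separate schema definitions would enforce.

```python
import xml.etree.ElementTree as ET

# Hypothetical per-type rules: what each specialised definition would demand.
REQUIRED = {
    "civilcase": ["damages-sought"],
    "criminalcase": ["maximum-penalty"],
}

def missing_elements(case):
    """Return the required child elements that a case element lacks."""
    return [name for name in REQUIRED.get(case.tag, []) if case.find(name) is None]

draft1 = ET.fromstring("""
<caselist>
  <civilcase id="ci-123"><damages-sought>5000</damages-sought></civilcase>
  <criminalcase id="cr-123"></criminalcase>
</caselist>
""")

for case in draft1:
    print(case.get("id"), missing_elements(case))
```

Each new case type needs its own entry in the table, which is exactly the price you pay for being prescriptive.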

To confuse matters more for the beginner: you can mix schemata, so you can mix specialised information into a more generalised base schema. IBM uses this approach for IBM Connections, where the general base schema is ATOM and missing elements and attributes are mixed in from a Connections-specific schema.

You find a similar approach in MS SharePoint, where a SharePoint payload is wrapped into 2 layers of open standards: ATOM and OData (only to be proprietary at the very end).
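A rough sketch of how such a mix-in looks from the consumer side, using Python's standard library. The Atom namespace is the real one; the `urn:example:court` namespace and the `casetype` element are made up for this illustration and are not the actual Connections or SharePoint schema. The entry is also stripped down and not a complete, valid Atom entry.

```python
import xml.etree.ElementTree as ET

ATOM = "http://www.w3.org/2005/Atom"   # the real Atom namespace
COURT = "urn:example:court"            # hypothetical extension namespace

entry_xml = f"""
<entry xmlns="{ATOM}" xmlns:court="{COURT}">
  <id>urn:example:case:ci-123</id>
  <title>Pan vs. Hook</title>
  <court:casetype>civil</court:casetype>
</entry>
"""

entry = ET.fromstring(entry_xml)
ns = {"atom": ATOM, "court": COURT}

# A generic Atom consumer reads only the elements it knows ...
title = entry.findtext("atom:title", namespaces=ns)
# ... while a court-aware consumer also picks up the mixed-in element.
case_type = entry.findtext("court:casetype", namespaces=ns)

print(title, case_type)
```

The namespaces keep the two vocabularies apart, so a feed reader that knows nothing about courts can still process the entry.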

When we abstract the case schema we would probably use something like:

    <caselist>
      <case id="ci-123" type="civil"> ... </case>
      <case id="cr-123" type="criminal"> ... </case>
      <case id="ad-123" type="admin"> ... </case>
      <case id="in-123" type="inherit"> ... </case>
    </caselist>

A little "fallacy" here: in the id field the case type is duplicated. While this is not in conformance with "the pure teachings", it is a practical compromise. In real life the case ID will be used as an isolated identifier "outside" of IT. Typically we find encoded information like year, type, running number, chamber etc.

One could argue that a case is just a specific kind of document and push for further abstraction. Any information inside could also be expressed as an abstract item:
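Since the type now lives in two places, an application would probably want to check that they agree. A minimal sketch, assuming the simple `prefix-number` IDs used in the example above; the `PREFIX_TO_TYPE` mapping is my own invention, and a real case ID with year and chamber would need a richer parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical mapping from the ID prefix to the value of the type attribute.
PREFIX_TO_TYPE = {"ci": "civil", "cr": "criminal", "ad": "admin", "in": "inherit"}

def check_case(case):
    """Split the id into prefix and running number; flag a type mismatch."""
    prefix, _, number = case.get("id").partition("-")
    consistent = PREFIX_TO_TYPE.get(prefix) == case.get("type")
    return prefix, int(number), consistent

caselist = ET.fromstring("""
<caselist>
  <case id="ci-123" type="civil"/>
  <case id="cr-123" type="criminal"/>
  <case id="ad-123" type="inherit"/>
</caselist>
""")

results = [check_case(case) for case in caselist.iter("case")]
for result in results:
    print(result)
```

The third case is deliberately inconsistent, so its last value comes back as `False`.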

    <document type="case" subtype="civil" id="ci-123">
      <content name="plaintiff" type="person">Peter Pan</content>
      <content name="defendant" type="person">Captain Hook</content>
    </document>

Looks familiar? Presuming you could have more than one plaintiff you could write:

    <document form="civilcase">
      <noteinfo unid="AA12469B4BFC2099852567AE0055123F">
        <created>
          <datetime>20120313T143000,00+08</datetime>
        </created>
      </noteinfo>
      <item name="plaintiff">
        <text>Peter Pan</text>
        <text>Tinkerbell</text>
      </item>
      <item name="defendant">
        <text>Captain Hook</text>
      </item>
    </document>

Yep - good ol' DXL! While this is a good format for a generalised information management system, it is IMHO too abstract for your use case. When you create forms and views, you actually demonstrate the intent to specialise. The beauty here: the general format of your persistence layer won't get in the way when you modify your application layer.
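To illustrate how little the generic format cares about your application, here is a small Python sketch that reads any such document into a dictionary of multi-value items. It works on the simplified markup from the example above and ignores the XML namespace a real DXL export carries; `read_items` is a helper name I made up.

```python
import xml.etree.ElementTree as ET

dxl_like = """
<document form="civilcase">
  <item name="plaintiff"><text>Peter Pan </text><text>Tinkerbell </text></item>
  <item name="defendant"><text>Captain Hook </text></item>
</document>
"""

def read_items(document):
    """Map each item name to the list of its text values."""
    return {
        item.get("name"): [(t.text or "").strip() for t in item.findall("text")]
        for item in document.findall("item")
    }

doc = ET.fromstring(dxl_like)
items = read_items(doc)
print(doc.get("form"), items)
```

The same function works unchanged for a criminal case or an "alien matters" filing; only the `form` attribute and the item names tell you what you are looking at.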

Of course this flexibility requires a little more care to make your application easy to understand for the next developer. Back to our example, time to peek inside. How should the content be structured there?